LLNL’s Computing & Communications portfolio is a gateway to LLNL’s wide variety of solutions and intellectual property for use in information technologies, communications, quantum sciences, data sciences, and applied software, modeling, and simulation. LLNL’s long history and strong capabilities in computing underpin our success in research, in developing new solutions for our missions, and in our collaborations with the academic and private sectors. We license solutions through mechanisms suited to each use case, ranging from open-source software licenses to nonexclusive end-user licenses to custom proprietary licenses for distributors, startups, and other commercialization licensees. We also collaborate with industry partners interested in applying LLNL’s unique capabilities and computing solutions to their own challenges.

Portfolio News and Multimedia

In a record-setting year for Lawrence Livermore National Laboratory (LLNL), four teams of LLNL researchers will attend the Department of Energy’s (DOE) Energy I-Corps (EIC) Cohort 20 this spring.

The EIC is a key initiative of the DOE’s Office of Technology Commercialization and is facilitated at LLNL by Hannah Farquar of the Innovation and Partnerships Office (IPO). Established in 2015, EIC pairs teams of scientists with industry mentors to train researchers in moving DOE lab-developed technologies toward commercialization.

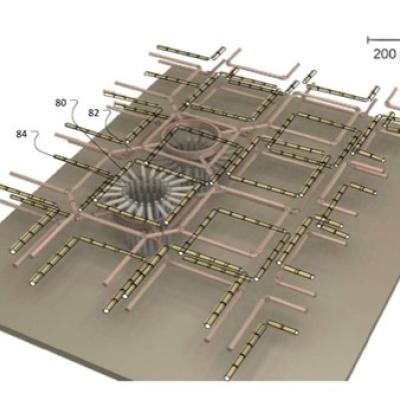

Lawrence Livermore National Laboratory has developed GridDS, an open-source data-science toolkit for power and data engineers that will provide an integrated energy data storage and augmentation infrastructure, as well as a flexible and comprehensive set of state-of-the-art machine-learning models.

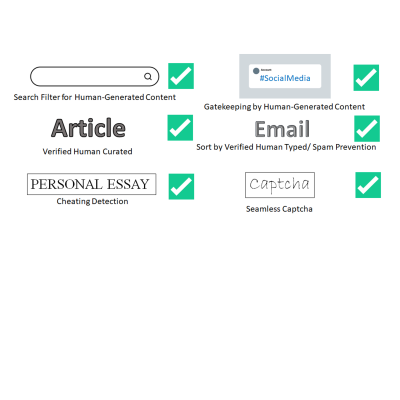

With business applications moving from traditional corporate networks to the cloud, a crucial part of any organization’s cybersecurity is managing which users can access its computers, networks, software applications, and data. LLNL’s One ID technology is a cost-effective way to simplify enterprise security management for a large organization.